The Central Government has notified amendments to the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021 to address emerging digital challenges. The new regulations, called the Information Technology Amendment Rules, 2026, take effect on February 20, 2026. Their main focus is to place strict checks on content generated by Artificial Intelligence (AI), especially deepfakes and misleading information that spreads quickly on social media.

Mandatory labeling for AI content

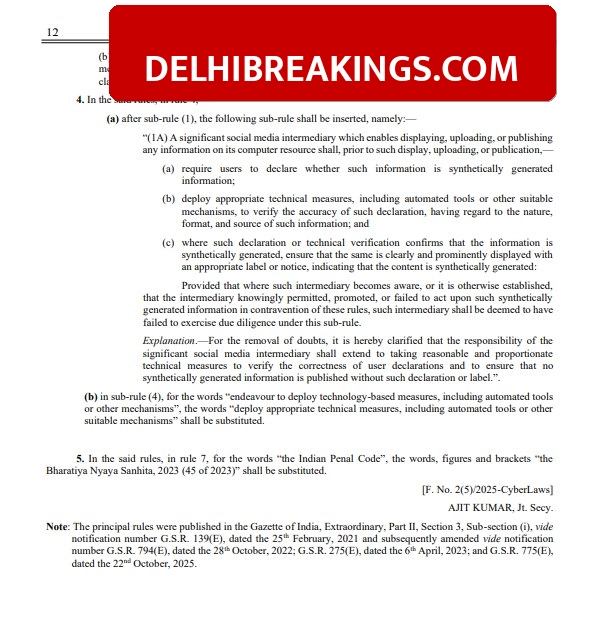

Under the new rules, social media platforms and other digital intermediaries must clearly label any content that is created or modified using AI. The government has defined ‘Synthetically Generated Information’ to include any audio, video, photo, or other data made through algorithms. Platforms must also use technical tools to identify such content and attach permanent digital markers or metadata to it, so that users can tell whether a video or image is real or computer-generated.

Stricter time limit for action

The government has sharply shortened the timeline for removing illegal content. If a court or a government agency reports unlawful or misleading AI-generated content, the platform must remove it within 3 hours; previously, companies had 36 hours. Platforms must also remind their users every three months about the rules and inform them that sharing illegal AI content can lead to police action under the Bharatiya Nyaya Sanhita, 2023.
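To make the change in the removal window concrete, the short sketch below computes the latest removal time under the new 3-hour limit and the earlier 36-hour limit. It is only an illustration: the function names, the sample report time, and the assumption that the clock starts the moment the report is received are hypothetical and are not taken from the text of the rules.

```python
from datetime import datetime, timedelta, timezone

# Removal windows as described in the article.
NEW_WINDOW = timedelta(hours=3)    # under the amended rules
OLD_WINDOW = timedelta(hours=36)   # earlier limit


def removal_deadline(reported_at, window=NEW_WINDOW):
    """Latest time by which the content must be removed.

    Assumption: the window starts when the report is received.
    """
    return reported_at + window


def is_overdue(reported_at, now, window=NEW_WINDOW):
    """True if the removal window has already passed at time `now`."""
    return now > removal_deadline(reported_at, window)


# Hypothetical report received at 10:00 UTC on the day the rules take effect.
reported = datetime(2026, 2, 20, 10, 0, tzinfo=timezone.utc)

print("New deadline:", removal_deadline(reported).isoformat())
print("Old deadline:", removal_deadline(reported, OLD_WINDOW).isoformat())
```

For the same report, the new limit leaves a platform a twelfth of the time it had before, which is why automated detection and labeling tools matter under the amended rules.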